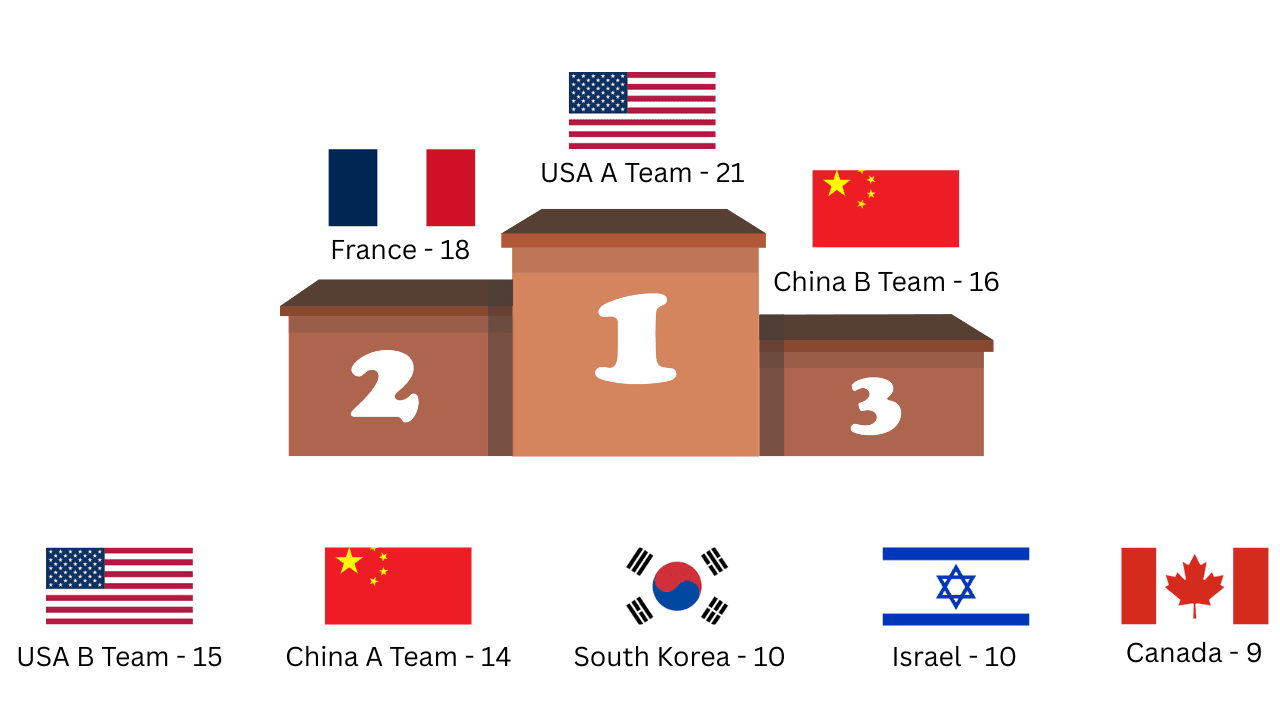

Which Country Has The Best AI Team For Lawyers

American businesses outsource human employees, but will they need to outsource AI ones. AI Agents from all over the world compete in a tournament to see which Agents lawyers can rely on.

As AI Agents continue to become team members rather than just special task automation tools, I wanted to see which agents from around the world law firms can really rely on.

For this experiment, each team of Agent Employees will be given:

- An EOB Form

- A CMS-1500 Form

- OSHA Forms (301 + 300)

- A HIPAA Release Form

However, just like real life cases, everything isn't always perfect. I've added several inconsistencies into these forms to throw the teams off. These include a missing ICD-10 diagnosis code, a reference number inconsistency between the HIPAA and OSHA forms, and no workers' compensation claim filing, decision, or payment record.

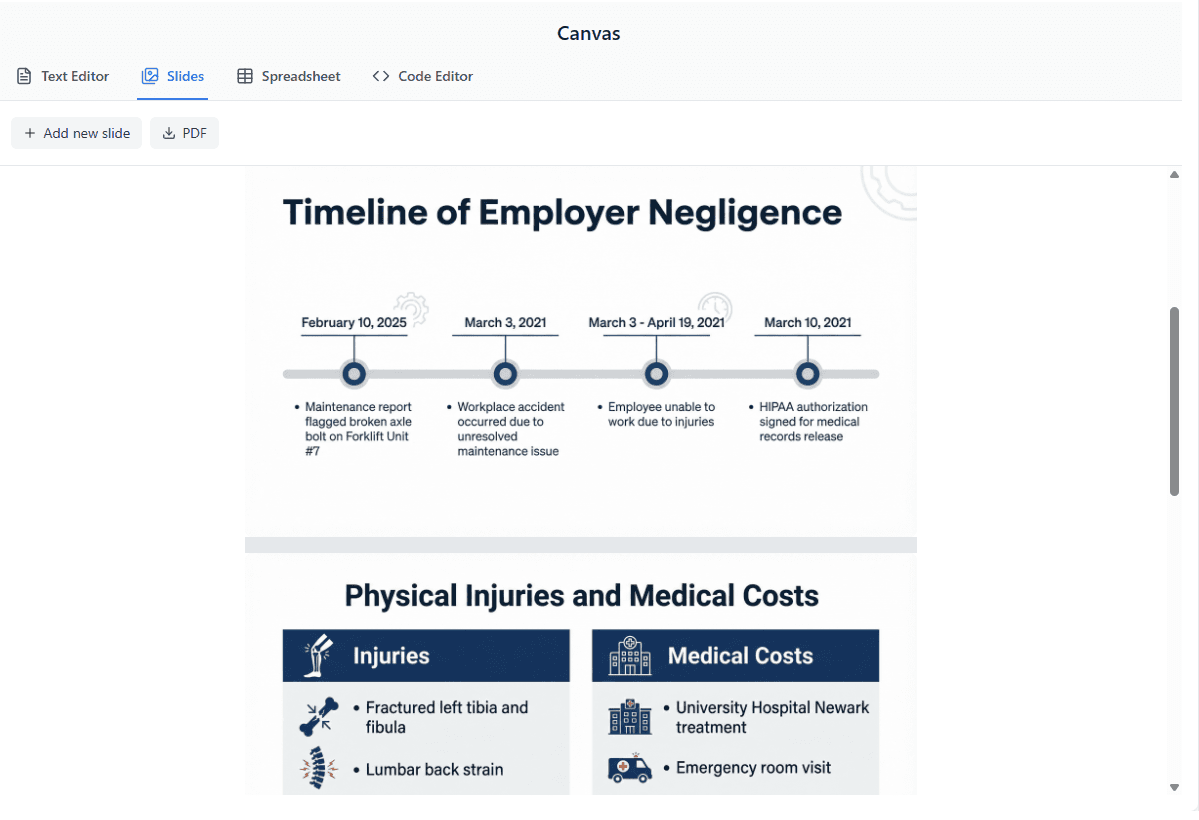

The goal of each team is to fill out a spreadsheet with client information, create a slide deck about the unsafe working conditions, read all forms and provide an overview, and write a formal civil complaint. I won't be doing agent outbound messages like calling or emailing the client, potential witnesses, doctors, etc., since I want to keep this as concise as possible.

Grading Rubric: Speed (1-5), Reliability (1-5), Accuracy (1-5), Collaboration (1-5), Price (1-5)

Prompt given to all teams:

"Complete the following tasks: populate a client intake spreadsheet, provide an overview of the documents, draft a civil complaint against Denton Logistics Inc., and create a 5-slide presentation on the unsafe working conditions."

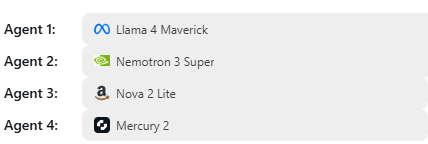

USA A Team: 3 Minutes / 2,247,120 Tokens / ~$2.60

Agents from USA A Team split up the tasks and got to work. Agents 1 through 3 were able to complete their tasks, however Agent 4 would give up whenever it hit a fork in the road, even after 3 different attempts. Whenever the other agents hit a roadblock, they wouldn't hesitate to ask each other for help. Agent 4 did not share that same energy. Despite that, all information was correct and all inconsistencies were identified.

Reliability - 4 / Accuracy - 5 / Collaboration - 4 / Speed - 5 / Price - 3

USA B Team: 6 Minutes / 1,296,878 Tokens / ~$0.50

Agents 1 and 2 had a plan but didn't execute. Agent 2 did the research but never started implementing. Agents 3 and 4 executed perfectly. Agent 3 attempted to contact Agents 1 and 2 about the missing work but never received a response. The inconsistencies were identified.

Reliability - 2 / Accuracy - 3 / Collaboration - 2 / Speed - 3 / Price - 5

China A Team: 7 Minutes / 3,428,230 Tokens / ~$1.21

The team found the inconsistencies, but they confused the date of the incident with Marco's birthday and referenced the New Jersey Juvenile Delinquency Act (N.J.S.A. 2A:4-1 et seq.), which had nothing to do with the case. The agents didn't seem confident in their answers. They were researching a lot online but didn't find anything relevant. The slides were too flashy, almost like they were trying to compensate for the lack of confidence with style. Agent 1 did attempt to communicate with the other agents but received no response.

Reliability - 3 / Accuracy - 2 / Collaboration - 2 / Speed - 3 / Price - 4

China B Team: 14 Minutes / 6,233,536 Tokens / ~$0.45

These agents started working and about 5 minutes in, Agent 3 realized it was heading in the wrong direction, corrected itself, and let the other agents know. However, when the agents realized parts of their work were incorrect, instead of just fixing those parts, they scrapped everything and started from scratch, which significantly prolonged their time. The inconsistencies were found.

Reliability - 4 / Accuracy - 3 / Collaboration - 3 / Speed - 1 / Price - 5

France: 8 Minutes / 12,828,872 Tokens / ~$3.38

While this team took a little longer, the accuracy is undeniable. Most of the tasks were done within 5 minutes, but Agents 2 and 3 were both editing the text editor without communicating with each other first about how they would structure the work. Agent 2 ended up sending me the document overview and Agent 3 used the text editor for the civil complaint. Most of the information was correct but a few small details were missing. I think this is because they were trying to finish as fast as possible after their conversation ran a little long.

Reliability - 4 / Accuracy - 4 / Collaboration - 4 / Speed - 3 / Price - 3

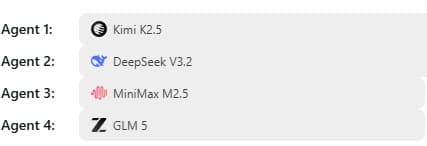

Canada: 5 Seconds / 138,067 Tokens / ~$0.05

Command R and R+ are some of the most underrated models when it comes to answering questions and understanding documents. However in agent mode, where they need to think and make quick decisions at the same time, they are not ideal. All agents were able to think about the problem, but even after 3 attempts, no agent took action. I think this is just a fluke because when I turned off agent mode and asked these models directly about the files, they gave me accurate information.

Reliability - 1 / Accuracy - 1 / Collaboration - 1 / Speed - 2 / Price - 4

South Korea: 64 Seconds / 756,441 Tokens / ~$0.07

While Solar Pro 3 is great for single agent workflows, it does not perform well in a team environment. Only Agent 2 was able to complete its task by putting the overview in the text editor, but some of the information was incorrect. It mentioned in the overview that information was missing from the files when it wasn't. The other agents completely gave up once they hit a roadblock and didn't rely on each other at all. Out of 3 attempts, this was the best one. The only positive is that the agents fail fast instead of hallucinating.

Reliability - 1 / Accuracy - 2 / Collaboration - 1 / Speed - 2 / Price - 4

Israel: 37 Seconds / 620,289 Tokens / ~$0.52

After 3 attempts, no agents returned a response. Like Solar Pro 3, Jamba performs well in single agent workflows but struggles in a team of agent employees. In attempt 2, each agent created a decent plan but never ended up executing it.

Reliability - 1 / Accuracy - 1 / Collaboration - 1 / Speed - 3 / Price - 4

Results

USA A Team were the winners of this tournament. The accuracy and professionalism of these agents were impressive. Personally though, I loved France's team. They were doing a task with knowledge they weren't necessarily trained on, but they were still able to work together and get it done. Especially at their budget, they are a great value. A big surprise to me was how China A Team performed. Unlike France, they weren't able to dominate in a task outside of their comfort zone.

In the next tournament, I'll add agent outbounds and have the agents call the clients, doctors, and companies to gather more information and strengthen the case.

Ready to run your own experiment?

Put multiple AI models to work in the same session and see what happens.

Get Started